It is often said that if you do not want your information to be stolen, don’t put it on the Internet. However, the Internet has become an integral part of our lives, and we can’t help but post some kind of web site, blog, or forum. Even if you don’t tell anyone about your web site, once it is published it will eventually be discovered.

How, you ask? By robot indexing programs, A.K.A. bots, crawlers and spiders. These little programs swarm out onto the Internet looking up every web site, caching and logging web site information in their databases. Often created by search engines to help index pages, they roam the Internet freely crawling all web sites all the time.

Normally this is an acceptable part of the Internet, but some search engines are so aggressive that they can increase bandwidth consumption. And some bots are malicious, stealing photos from web sites or harvesting email addresses so that they can be spammed. The simplest way to block these bots is to create a simple robots.txt file that contains instructions to block the bots:

User-agent: *

Disallow: /

However, this approach has a weakness. A robots.txt file is only a request, so bots can still hit the site, ignoring the file and your wish not to be indexed.
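To make the point concrete, here is a minimal sketch of how a well-behaved crawler would read the two lines above, using Python's standard `urllib.robotparser`. The helper name `is_allowed` and the example.com URL are made up for illustration. The check runs on the crawler's side, which is why a bot that skips it is not stopped by anything.

```python
from urllib.robotparser import RobotFileParser

# The same rules shown above: every user agent, whole site disallowed.
ROBOTS_TXT = """\
User-agent: *
Disallow: /
"""


def is_allowed(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if a compliant crawler would consider `url` fetchable.

    `is_allowed` is a hypothetical helper, not part of any crawler.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    # A polite bot asks first and gets a "no" for every path.
    print(is_allowed("YandexBot", "http://example.com/photos/"))  # False
```

Nothing on the server enforces this answer. A bot that never calls the equivalent of `can_fetch` simply requests the page anyway.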

But there is good news. If you are on an IIS 7 server, you have another alternative. You can use the RequestFiltering rule that is built into IIS 7. It works at the web server level, before the request reaches your application, so a bot cannot simply ignore it the way it can ignore robots.txt.

The setup is fairly simple, and the easiest and fastest way to create your RequestFiltering rule is to code it in your application’s web.config file. The requestFiltering element goes inside the <system.webServer><security> elements. If you do not have these in your application’s web.config file, you should be able to create them. Once they are in place, type the following to set up your RequestFiltering rule.

    <requestFiltering>
      <filteringRules>
        <filteringRule name="BlockSearchEngines" scanUrl="false" scanQueryString="false">
          <scanHeaders>
            <clear />
            <add requestHeader="User-Agent" />
          </scanHeaders>
          <appliesTo>
            <clear />
          </appliesTo>
          <denyStrings>
            <clear />
            <add string="YandexBot" />
          </denyStrings>
        </filteringRule>
      </filteringRules>
    </requestFiltering>
    <authentication>
      <basicAuthentication enabled="true" />
      <anonymousAuthentication enabled="true" />
    </authentication>

You can name the filtering rule whatever you’d like. In the “requestHeader” attribute, make sure you specify “User-Agent.” In the “add string” element, specify the User-Agent name you want to deny. In this example I set it to YandexBot, which blocks a search engine originating from Russia. You can also block search engines such as Googlebot or Bingbot.

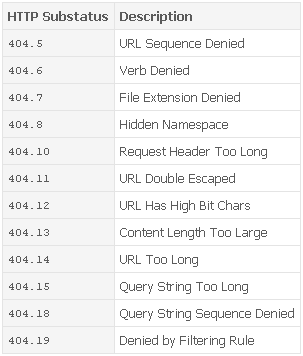

If you want to see whether this rule is actually blocking these bots, you will need to download your raw HTTP logs from the server and parse them, looking at the User-Agent field. If you scroll across and find the sc-status field (status code) for those requests, you should see a 404 HTTP response. The log also carries an sc-substatus field, which holds the substatus code that qualifies the primary HTTP response code.
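If you would rather not scroll through the logs by hand, the check can be scripted. The following is a rough sketch, assuming your logs are in the W3C extended format that IIS writes by default, where a `#Fields:` line names the columns and the User-Agent column is called `cs(User-Agent)`. The function names and the sample log lines are invented for illustration; your real logs will have more fields and different values.

```python
from typing import Dict, Iterable, Iterator, List


def parse_w3c_log(lines: Iterable[str]) -> Iterator[Dict[str, str]]:
    """Yield one dict per request, keyed by the names on the #Fields: line."""
    fields: List[str] = []
    for raw in lines:
        line = raw.strip()
        if not line:
            continue
        if line.startswith("#Fields:"):
            fields = line[len("#Fields:"):].split()
            continue
        if line.startswith("#") or not fields:
            continue  # other directives, or data before any #Fields: line
        values = line.split()
        if len(values) != len(fields):
            continue  # skip malformed rows rather than guess
        yield dict(zip(fields, values))


def blocked_requests(lines: Iterable[str], agent_substring: str) -> List[Dict[str, str]]:
    """Return entries whose User-Agent contains the substring and got a 404."""
    needle = agent_substring.lower()
    return [
        entry
        for entry in parse_w3c_log(lines)
        if needle in entry.get("cs(User-Agent)", "").lower()
        and entry.get("sc-status") == "404"
    ]


# Invented sample data, trimmed to a few fields for readability.
SAMPLE_LOG = """\
#Software: Microsoft Internet Information Services 7.5
#Fields: date time cs-method cs-uri-stem cs(User-Agent) sc-status sc-substatus
2013-01-01 00:00:01 GET / Mozilla/5.0+(compatible;+YandexBot/3.0) 404 19
2013-01-01 00:00:02 GET / Mozilla/5.0+(Windows+NT+6.1) 200 0
"""

if __name__ == "__main__":
    for hit in blocked_requests(SAMPLE_LOG.splitlines(), "YandexBot"):
        print(hit["date"], hit["time"], hit["sc-status"], hit["sc-substatus"])
```

To run it against a real log, you could pass an open file instead of the sample, for example `blocked_requests(open("u_ex130101.log"), "YandexBot")`, where the file name is only a placeholder.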

Here is a list of potential substatus codes you may see when you impose your RequestFiltering rule.

Hi Raymond,

Great tidbit!

How would I stop Google from indexing any links via a hosting service’s temporary subdomain that my site was on?

My site had a temporary domain mysite.web34.hosts.co.za

which I now have a proper domain name for.

The only way Google can find the alternate URL is if you link to it somewhere. What we find sometimes is people use it while they are developing their site and then when they launch they overlook a link somewhere to the alternate URL, so Google picks it up.

If you make sure no such links are out there, Google won’t find it. If there are links out there and you remove them, it’s a little more difficult to get those URLs removed.

Unfortunately there isn’t (yet) any way for us to provide a test bed for customer accounts that can be deleted, so we (and everyone else out there) use the alternate URLs.

What should the option be if I want to block all spiders/bots?

My site is under testing.

Is there any way, like (.*) or (*), to block all spiders?

User-agent: *

Disallow: /

Yes, this is fine for the robots.txt file. I was asking about the request filtering section,

because a robots.txt file doesn’t block spiders 100%. So, what will the section be?

The above only blocks YandexBot, but I have to block all.

Unfortunately there are no settings in RequestFiltering that will block all search engines. That’s because RequestFiltering does not block the specific activity a spider performs; it blocks by the User-Agent name the spider identifies itself with. In all honesty, I don’t think anything can block the indexing that every search engine performs, not at the application level, nor at the server or network level. So unless you take your site offline, where it cannot connect to the Internet, you will need to define the specific search engines one at a time. Keep in mind that some may change their User-Agent name depending on the location they are coming from. Try looking at this User-Agent list: http://www.user-agents.org/
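If the list of search engines you want to define grows long, typing one entry per User-Agent by hand gets tedious. Here is a small sketch that generates a filteringRule element shaped like the one in the post from a list of names, using Python's standard `xml.etree.ElementTree`. The function name `build_filtering_rule` and the particular list of bots are only examples, and you would still need to paste the output inside your own <filteringRules> section.

```python
import xml.etree.ElementTree as ET
from typing import Iterable


def build_filtering_rule(name: str, user_agents: Iterable[str]) -> str:
    """Return a <filteringRule> element with one deny string per user agent.

    Hypothetical helper; it mirrors the structure of the rule in the post.
    """
    rule = ET.Element(
        "filteringRule",
        {"name": name, "scanUrl": "false", "scanQueryString": "false"},
    )

    # Only the User-Agent request header is scanned.
    scan_headers = ET.SubElement(rule, "scanHeaders")
    ET.SubElement(scan_headers, "clear")
    ET.SubElement(scan_headers, "add", {"requestHeader": "User-Agent"})

    applies_to = ET.SubElement(rule, "appliesTo")
    ET.SubElement(applies_to, "clear")

    # One <add string="..." /> entry per bot name.
    deny_strings = ET.SubElement(rule, "denyStrings")
    ET.SubElement(deny_strings, "clear")
    for agent in user_agents:
        ET.SubElement(deny_strings, "add", {"string": agent})

    return ET.tostring(rule, encoding="unicode")


if __name__ == "__main__":
    print(build_filtering_rule("BlockSearchEngines", ["YandexBot", "Googlebot", "Bingbot"]))
```

The output is a single line of XML. It only covers the names you give it, so a bot that identifies itself with a User-Agent not on the list will still get through.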

Hi Raymond,

Thanks for the reply. It is really interesting to learn that we can’t block spiders 100%.

I thought that I could block all spiders using a robots.txt file and nofollow meta tags.

Again, thanks for this valuable info.

Technically, robots.txt does not really block spiders. It is simply a statement to the search engines indexing your site that you do not want to be indexed. They can ignore it and keep indexing your site, which is why robots.txt will not block spiders 100%.

Hi

I just added this to my website tooled for libwww-perl and finally passed that part of a SEO test. My site had trouble with this part of the code:

So I ended up commenting it out. Will that have any unwanted effects?

I’m very much a novice in these matters and because I didn’t have access to the IIS directly, but only write access to the web.config, I had a lot of trouble finding a solution to this problem. Thank you a lot, I’ve been googling this for like 3-4 hours 😀

Whoops, I didn’t use the right tag for posting code I think.

The part of code my site had trouble with (it crashed) was everything in and including the authentication-tag, but specifically anonymousAuthentication=true.